Add NeoRefacer code and updates

LICENSE

@@ -23,9 +23,9 @@ furnished to do so, subject to the following conditions:
- The above copyright notice and this permission notice shall be included in
all copies or substantial portions of the Software.

- You may only use this Software with content (such as images) for which you
have the necessary rights and permissions. Unauthorized use of third-party
content is strictly prohibited.
- You may only use this Software with content (such as images and videos)
for which you have the necessary rights and permissions. Unauthorized use of
third-party content is strictly prohibited.

- This Software is intended for educational and research purposes only. Use
of this Software for malicious purposes, including but not limited to identity

@@ -69,10 +69,33 @@ If you wish to use this project solely under the MIT License (for example,
for commercial purposes), you **must remove** the `codeformer` component.
Please follow the instructions provided below:

- Remove the subdirectories basicsr, facelib and weights.
- Remove the subdirectories basicsr and facelib.
- Within the weights subdirectory, remove Codeformer and facelib.
- Remove codeformer_wrapper.py.
- Edit refacer.py and remove the import: `from codeformer_wrapper import enhance_image`
- Within `def reface_image`, comment out the line: `output_path = enhance_image(output_path)`
- That's all!

Failure to remove `codeformer` when required may violate the terms of its license.

About User Outputs:
The outputs generated by this software (such as refaced images or videos) are not
subject to the CC BY-NC-SA license and may be used freely, including for commercial
purposes, regardless of whether the optional codeformer component is used.

Explanation:

Codeformer is a model that processes images for face enhancement.
It does not embed its own visible content into the output.

This is very different from a case where licensed assets (such as textures,
overlays, characters, backgrounds, or artwork) appear visibly in the output.

Codeformer simply modifies the input image without adding original copyrighted
material.

Therefore, output images are not derivative works of Codeformer
and are not bound by the NonCommercial restrictions of its license.

Only the Codeformer model code and weights themselves are under CC BY-NC-SA 4.0,
not the results produced through their use.
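
The removal steps above are manual. The Python sketch below can help you check a checkout afterwards. It only reports which components are still present and deletes nothing. It is not part of NeoRefacer. The helper name `remaining_codeformer_parts` is hypothetical. The paths (`basicsr/`, `facelib/`, `weights/codeformer/`, `weights/facelib/`, `codeformer_wrapper.py`) are assumptions taken from the list above, so the folder casing may need adjusting.

```python
from pathlib import Path

# Hypothetical list of codeformer-related paths, relative to the repo root.
# Adjust the casing (e.g. "weights/CodeFormer") to match your checkout.
CODEFORMER_PATHS = [
    "basicsr",
    "facelib",
    "weights/codeformer",
    "weights/facelib",
    "codeformer_wrapper.py",
]


def remaining_codeformer_parts(repo_root: str) -> list[str]:
    """Return the codeformer components that still exist under repo_root.

    Nothing is deleted; this only reports what is left to remove.
    """
    root = Path(repo_root)
    leftovers = [p for p in CODEFORMER_PATHS if (root / p).exists()]

    # refacer.py should no longer use enhance_image outside of comments
    # (the import is removed and the call is commented out).
    refacer_py = root / "refacer.py"
    if refacer_py.is_file():
        lines = refacer_py.read_text(encoding="utf-8").splitlines()
        if any("enhance_image" in line and not line.lstrip().startswith("#") for line in lines):
            leftovers.append("refacer.py: enhance_image reference")
    return leftovers


if __name__ == "__main__":
    parts = remaining_codeformer_parts(".")
    if parts:
        print("Still present:", *parts, sep="\n  ")
    else:
        print("No codeformer components found.")
```

Run the script from the repository root. An empty result means the listed folders are gone and `refacer.py` has no remaining uncommented `enhance_image` reference.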

README.md

@@ -1,23 +1,63 @@

|

||||

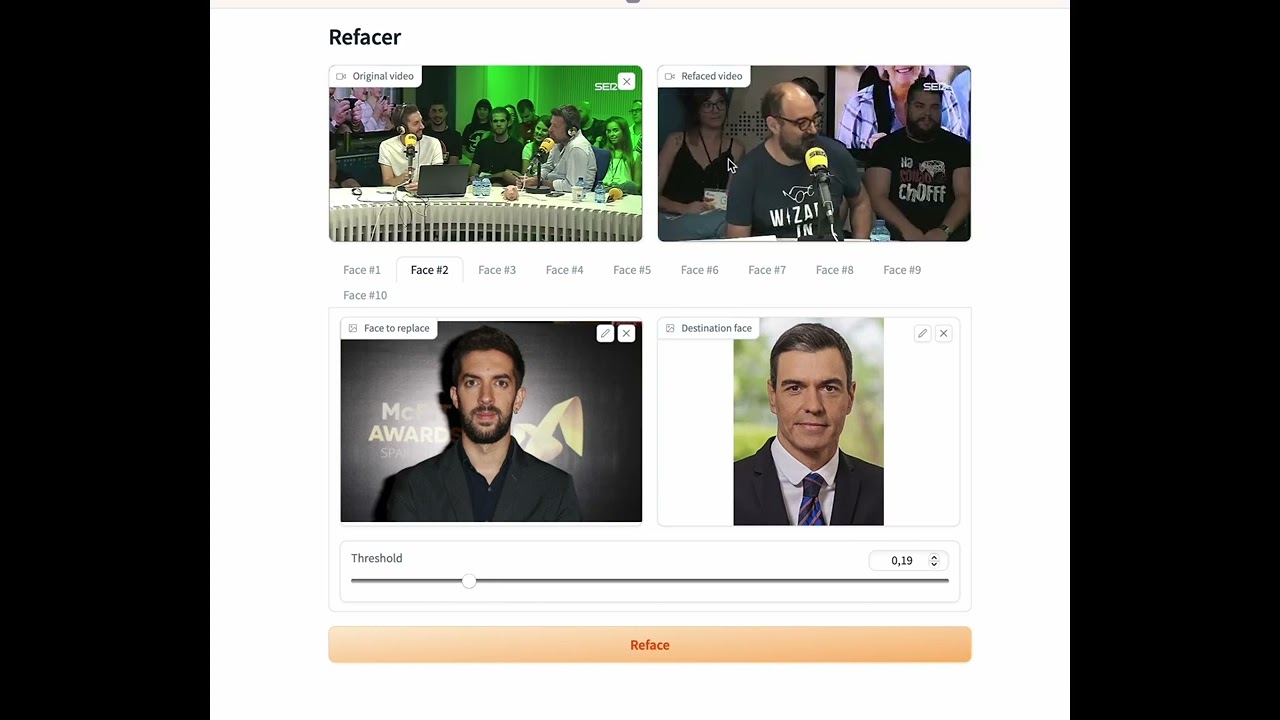

# Refacer: One-Click Deepfake Multi-Face Swap Tool

|

||||

<img src="https://raw.githubusercontent.com/MechasAI/NeoRefacer/main/icon.png"/>

|

||||

|

||||

[](https://colab.research.google.com/github/xaviviro/refacer/blob/master/notebooks/Refacer_colab.ipynb)

|

||||

# NeoRefacer: Images. GIFs. Full-length videos.

|

||||

|

||||

👉 [Watch demo on Youtube](https://youtu.be/mXk1Ox7B244)

|

||||

In a future where identity flows like data and reality is just another layer, NeoRefacer gives you the power to transform.

|

||||

|

||||

Refacer, a simple tool that allows you to create deepfakes with multiple faces with just one click! This project was inspired by [Roop](https://github.com/s0md3v/roop) and is powered by the excellent [Insightface](https://github.com/deepinsight/insightface). Refacer requires no training - just one photo and you're ready to go.

|

||||

Images. GIFs. Full-length videos.

|

||||

|

||||

:warning: Please, before using the code from this repository, make sure to read the [disclaimer](https://github.com/xaviviro/refacer/tree/main#disclaimer).

|

||||

All yours to reface and reimagine - with a single pulse of electricity.

|

||||

|

||||

## Demonstration

|

||||

Evolved from the foundations of the [Refacer](https://github.com/xaviviro/refacer) project, NeoRefacer is a next-generation, fully open-source refacer.

|

||||

|

||||

|

||||

<img src="https://raw.githubusercontent.com/MechasAI/NeoRefacer/main/demo.jpg"/>

|

||||

|

||||

[](https://youtu.be/mXk1Ox7B244)

|

||||

1. Clone the repository.

|

||||

2. Spin up the environment.

|

||||

3. Launch the local interface.

|

||||

4. Control the face of tomorrow.

|

||||

|

||||

[OFFICIAL WEBSITE](https://www.mechas.ai/projects-neorefacer.php)

|

||||

|

||||

## Core DNA of NeoRefacer

|

||||

* **Instant Identity Shift** - Swap faces in images, GIFs, and movies faster than your neural implants can blink.

|

||||

* **Overclocked Engine** - Optimized for CPU rebels and GPU warlords.

|

||||

* **Feature Film Reface** - Not just TikToks. Full two-hour cinematic overthrows.

|

||||

* **Targeted Strike Modes** - Single-face raids, multi-face takeovers, or precision-targeted matchups.

|

||||

* **Bulk Warfare** - Mass-process entire image archives with industrial-scale automation.

|

||||

* **Neural Enhancement Suite** - Automatic image enhancement.

|

||||

|

||||

## Use Cases

|

||||

|

||||

* **Entertainment**: Rewrite memories, remix movies, animate the past.

|

||||

* **Education**: Step into history, speak through new faces.

|

||||

* **Content Creation**: Craft AI doubles, weave digital alter-egos.

|

||||

* **Business/Marketing**: Personalize ads inside the algorithmic flood.

|

||||

* **Niche Fun**: Trace ancestral echoes, forge RPG legends, hijack fame.

|

||||

|

||||

## What's New (Since Refacer)

|

||||

|

||||

* Image, GIF and Video reface modes

|

||||

* Significantly faster processing

|

||||

* Automatic image enhancing (Image mode)

|

||||

* Improved video output quality

|

||||

* Support for videos that have long duration

|

||||

* Preview generation for videos and GIFs (skips 90% of frames)

|

||||

* Multiple replacement modes:

|

||||

* **Single Face** (Fast): all faces are replaced by a single face. Ideal for images, GIFs or videos with a single face

|

||||

* **Multiple Faces** (Fast): faces are replaced by the faces you provide based on their order from left to right

|

||||

* **Faces by Match** (Slower): faces are first detected and replaced by the faces you provide.

|

||||

* Improved GPU detection

|

||||

* Uses local Gradio cache with auto-cleanup on startup

|

||||

* Includes a bulk image refacer utility (refacer_bulk.py)

|

||||

|

||||
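
The **Multiple Faces** mode pairs faces by their position from left to right. This commit does not show how NeoRefacer implements that ordering, so the sketch below is only one plausible approach. It assumes each detected face comes with a bounding box `(x1, y1, x2, y2)` and that destination faces are supplied in tab order (Face #1, Face #2, ...). `pair_left_to_right` is a hypothetical helper.

```python
from collections.abc import Sequence

# Hypothetical bounding-box format: (x1, y1, x2, y2) in pixels.
Box = tuple[float, float, float, float]


def pair_left_to_right(boxes: Sequence[Box], destinations: Sequence[str]) -> list[tuple[Box, str]]:
    """Pair detected faces with destination faces in left-to-right order.

    The leftmost face gets destinations[0], the next one destinations[1], and so on.
    Faces beyond the number of destinations are not paired (left unchanged).
    """
    ordered = sorted(boxes, key=lambda box: box[0])  # sort by left edge x1
    return list(zip(ordered, destinations))


# Three faces detected in arbitrary order, two destination faces supplied.
detected = [(300, 40, 380, 120), (20, 50, 100, 130), (160, 45, 240, 125)]
print(pair_left_to_right(detected, ["face_a.jpg", "face_b.jpg"]))
# [((20, 50, 100, 130), 'face_a.jpg'), ((160, 45, 240, 125), 'face_b.jpg')]
```

Sorting by the left edge alone is a simplification. Faces in different rows of a group photo would still be ordered purely by their horizontal position.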

NeoRefacer, just like the original Refacer project, requires no training - just one photo and you're ready to go.

:warning: Please, before using the code from this repository, make sure to read the [LICENSE](https://github.com/MechasAI/NeoRefacer/blob/main/LICENSE).

## System Compatibility

Refacer has been thoroughly tested on the following operating systems:
NeoRefacer has been tested on the following operating systems:

| Operating System | CPU Support | GPU Support |
| ---------------- | ----------- | ----------- |

@@ -29,59 +69,59 @@ The application is compatible with both CPU and GPU (Nvidia CUDA) environments,

:warning: Please note, we do not recommend using `onnxruntime-silicon` on MacOSX due to an apparent issue with memory management. If you manage to compile `onnxruntime` for Silicon, the program is prepared to use CoreML.

## Prerequisites

Ensure that you have `ffmpeg` installed and correctly configured. There are many guides available on the internet to help with this. Here are a few (note: I did not create these guides):

- [How to Install FFmpeg](https://www.hostinger.com/tutorials/how-to-install-ffmpeg)


## Installation

Refacer has been tested and is known to work with Python 3.10.9, but it is likely to work with other Python versions as well. It is recommended to use a virtual environment for setting up and running the project to avoid potential conflicts with other Python packages you may have installed.
NeoRefacer has been tested and is known to work with Python 3.11.11, but it is likely to work with other Python versions as well. It is recommended to use a virtual environment, such as [Conda](https://www.anaconda.com/download), for setting up and running the project to avoid potential conflicts with other Python packages you may have installed.

Follow these steps to install Refacer:
Follow these steps to install NeoRefacer and its dependencies:

1. Clone the repository:
```bash
git clone https://github.com/xaviviro/refacer.git
cd refacer
```
# Check if ffmpeg is available (if not, you might need to download it and add it to your PATH)
# Windows: download ffmpeg-git-essentials.7z from https://www.gyan.dev/ffmpeg/builds/
# Other systems: see a tutorial https://www.hostinger.com/tutorials/how-to-install-ffmpeg
ffmpeg

2. Download the Insightface model:
You can manually download the model created by Insightface from this [link](https://huggingface.co/deepinsight/inswapper/resolve/main/inswapper_128.onnx) and add it to the project folder. Alternatively, if you have `wget` installed, you can use the following command:
```bash
wget --content-disposition https://huggingface.co/deepinsight/inswapper/resolve/main/inswapper_128.onnx
```
# Clone the repository
git clone https://github.com/MechasAI/NeoRefacer.git
cd NeoRefacer

3. Install dependencies:
# Windows: Create the environment
conda create -n neorefacer-env python=3.11 nomkl conda-forge::vs2015_runtime

* For CPU (compatible with Windows, MacOSX, and Linux):
```bash
pip install -r requirements.txt
```
# Linux: Create the environment
conda create -n neorefacer-env python=3.11 nomkl

* For GPU (compatible with Windows and Linux only, requires an NVIDIA GPU with CUDA and its libraries):
```bash
# MacOS: Create the environment
conda create -n neorefacer-env python=3.11

# Activate the environment
conda activate neorefacer-env

# Install the dependencies:
# For CPU only (compatible with Windows, MacOSX, and Linux)
pip install -r requirements-CPU.txt

# For NVIDIA RTX GPU only (compatible with Windows and Linux only, requires an NVIDIA GPU with CUDA and its libraries)
pip install -r requirements-GPU.txt
```

* For CoreML (compatible with MacOSX, requires Silicon architecture):
```bash
# For CoreML only (compatible with MacOSX, requires Silicon architecture):
pip install -r requirements-COREML.txt
```

For more information on installing the CUDA libraries needed to use `onnxruntime-gpu`, please refer directly to the official [ONNX Runtime repository](https://github.com/microsoft/onnxruntime/).
For NVIDIA GPU, make sure you have both the NVIDIA GPU Computing Toolkit and NVIDIA cuDNN installed. The onnxruntime-gpu version must match your version of CUDA. This example uses onnxruntime-gpu 1.21.0, which is compatible with CUDA 12.6 and cuDNN 9.4. The refacer.py script preloads both libraries, so update its paths if your CUDA or cuDNN installation is in a different location or uses a different version.

For more details on using the Insightface model, you can refer to their [example](https://github.com/deepinsight/insightface/tree/master/examples/in_swapper).
For more information on installing the CUDA libraries needed to use `onnxruntime-gpu`, please refer directly to the official [ONNX Runtime repository](https://github.com/microsoft/onnxruntime/).
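
The install steps select one of three requirements files depending on the platform and hardware. The Python sketch below scripts that choice together with the `ffmpeg` check. It is a rough sketch and not a script shipped with NeoRefacer. It assumes the file names `requirements-CPU.txt`, `requirements-GPU.txt` and `requirements-COREML.txt` from the commands above. It uses `shutil.which` to look for `ffmpeg` on `PATH`. The caller passes in whether an NVIDIA GPU is present, because the sketch does not detect it. `pick_requirements` and `ffmpeg_available` are hypothetical names.

```python
import platform
import shutil


def pick_requirements(system: str, machine: str, has_nvidia_gpu: bool) -> str:
    """Return the requirements file matching the README's install matrix.

    system:  a platform.system() value, e.g. "Windows", "Linux", "Darwin" (macOS)
    machine: a platform.machine() value, e.g. "x86_64", "AMD64", "arm64"
    """
    if system == "Darwin":
        # CoreML needs Apple Silicon; Intel Macs fall back to the CPU build.
        return "requirements-COREML.txt" if machine == "arm64" else "requirements-CPU.txt"
    if system in ("Windows", "Linux") and has_nvidia_gpu:
        # GPU build: needs CUDA and cuDNN versions matching onnxruntime-gpu.
        return "requirements-GPU.txt"
    return "requirements-CPU.txt"


def ffmpeg_available() -> bool:
    """True if an ffmpeg executable can be found on PATH."""
    return shutil.which("ffmpeg") is not None


if __name__ == "__main__":
    req = pick_requirements(platform.system(), platform.machine(), has_nvidia_gpu=False)
    print(f"pip install -r {req}")
    if not ffmpeg_available():
        print("ffmpeg not found on PATH; install it before running app.py")
```

`platform.machine()` reports `arm64` for a native Apple Silicon Python. Under Rosetta it reports `x86_64`, which this sketch treats as an Intel Mac.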

## Usage

Once you have successfully installed Refacer and its dependencies, you can run the application using the following command:
Once you have successfully installed NeoRefacer and its dependencies, you can run the application using the following command:

```bash
python app.py

# Alternatively, if you need to force CPU mode
python app.py --force_cpu
```

Then, open your web browser and navigate to the following address:

@@ -90,21 +130,31 @@ Then, open your web browser and navigate to the following address:
http://127.0.0.1:7860
```

A bulk refacer utility is also available and can be called using the following command:

```bash
python refacer_bulk.py --input_path ./input --dest_face myface.jpg
```
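
`app.py` serves the UI on `--server_name`/`--server_port`, which default to `127.0.0.1` and `7860`. It creates the `Refacer` instance before calling `demo.launch`, so the page only answers after initialization finishes. A script that drives the UI may therefore want to wait for it. Below is a minimal standard-library sketch. `wait_for_server` is a hypothetical helper and not part of NeoRefacer.

```python
import time
import urllib.request


def wait_for_server(url: str = "http://127.0.0.1:7860", timeout: float = 60.0, interval: float = 1.0) -> bool:
    """Poll `url` until it answers with HTTP 200 or `timeout` seconds have passed.

    Returns True as soon as the server responds, False if the deadline is reached.
    """
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            with urllib.request.urlopen(url, timeout=interval) as resp:
                if resp.status == 200:
                    return True
        except OSError:
            # URLError, connection refusals and socket timeouts all derive from
            # OSError: the server is not up yet, so retry after a short pause.
            pass
        time.sleep(interval)
    return False
```

For example, start `python app.py` in one terminal. Then call `wait_for_server(timeout=120)` from a script before sending requests or opening the browser.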

## Questions?

If you have any questions or issues, feel free to [open an issue](https://github.com/xaviviro/refacer/issues/new) or submit a pull request.
If you have any questions or issues, feel free to [open an issue](https://github.com/MechasAI/NeoRefacer/issues/new).


## Recognition Module
## Third-Party Modules

The `recognition` folder in this repository is derived from Insightface's GitHub repository. You can find the original source code here: [Insightface Recognition Source Code](https://github.com/deepinsight/insightface/tree/master/web-demos/src_recognition)

This module is used for recognizing and handling face data within the Refacer application, enabling its powerful deepfake capabilities. We are grateful to Insightface for their work and for making their code available.
This module is used for recognizing and handling face data within the NeoRefacer application. We are grateful to Insightface for their work and for making their code available.

The image enhancement capability is based on [codeformer](https://github.com/felipedaragon/codeformer/) (by Shangchen Zhou) and [BasicSR](https://github.com/XPixelGroup/BasicSR). It also borrows some code from [Unleashing Transformers](https://github.com/samb-t/unleashing-transformers), [YOLOv5-face](https://github.com/deepcam-cn/yolov5-face), and [FaceXLib](https://github.com/xinntao/facexlib). Thanks for their awesome work.

## License

Note: This project uses a Custom MIT License. See LICENSE for full terms.
Note: This project uses a Custom MIT License that does not allow commercial use of the code unless you remove the image enhancement component (`codeformer`). The output (refaced image or video) is not restricted by CC BY-NC-SA and may be used freely, including for commercial purposes. See [LICENSE](https://github.com/MechasAI/NeoRefacer/blob/main/LICENSE) for full terms.

The generated content does not represent the views, beliefs, or attitudes of the authors of this Software. Please use the Software and its outputs responsibly, ethically, and with respect toward others.
The generated content (refaced images or videos) does not represent the views, beliefs, or attitudes of the authors of this Software. Please use the Software and its outputs responsibly, ethically, and with respect toward others.

## Credits
Special thanks to Roberto Marc for the additional testing.

app.py

@@ -1,93 +1,339 @@
import os
os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"

import gradio as gr
from refacer import Refacer
import argparse
import ngrok
import imageio
import numpy as np
from PIL import Image
import tempfile
import base64
import pyfiglet
import shutil
import time

print("\033[94m" + pyfiglet.Figlet(font='slant').renderText("NeoRefacer") + "\033[0m")

def cleanup_temp(folder_path):
    try:
        shutil.rmtree(folder_path)
        print("Gradio cache cleared successfully.")
    except Exception as e:
        print(f"Error: {e}")

# Prepare temp folder
os.environ["GRADIO_TEMP_DIR"] = "./tmp"
if os.path.exists("./tmp"):
    cleanup_temp(os.environ['GRADIO_TEMP_DIR'])
if not os.path.exists("./tmp"):
    os.makedirs("./tmp")

# Parse arguments
parser = argparse.ArgumentParser(description='Refacer')
parser.add_argument("--max_num_faces", type=int, help="Max number of faces on UI", default=5)
parser.add_argument("--force_cpu", help="Force CPU mode", default=False, action="store_true")
parser.add_argument("--share_gradio", help="Share Gradio", default=False, action="store_true")
parser.add_argument("--server_name", type=str, help="Server IP address", default="127.0.0.1")
parser.add_argument("--server_port", type=int, help="Server port", default=7860)
parser.add_argument("--colab_performance", help="Use in colab for better performance", default=False,action="store_true")
parser.add_argument("--ngrok", type=str, help="Use ngrok", default=None)
parser.add_argument("--ngrok_region", type=str, help="ngrok region", default="us")
parser.add_argument("--max_num_faces", type=int, default=8)
parser.add_argument("--force_cpu", default=False, action="store_true")
parser.add_argument("--share_gradio", default=False, action="store_true")
parser.add_argument("--server_name", type=str, default="127.0.0.1")
parser.add_argument("--server_port", type=int, default=7860)
parser.add_argument("--colab_performance", default=False, action="store_true")
parser.add_argument("--ngrok", type=str, default=None)
parser.add_argument("--ngrok_region", type=str, default="us")
args = parser.parse_args()

# Initialize
refacer = Refacer(force_cpu=args.force_cpu, colab_performance=args.colab_performance)

num_faces = args.max_num_faces

# Connect to ngrok for ingress
def connect(token, port, options):
    account = None
    if token is None:
        token = 'None'
    else:
        if ':' in token:
            # token = authtoken:username:password
            token, username, password = token.split(':', 2)
            account = f"{username}:{password}"
def create_dummy_image():
    dummy = Image.new('RGB', (1, 1), color=(255, 255, 255))
    temp_file = tempfile.NamedTemporaryFile(delete=False, suffix=".png")
    dummy.save(temp_file.name)
    return temp_file.name

    # For all options see: https://github.com/ngrok/ngrok-py/blob/main/examples/ngrok-connect-full.py
    if not options.get('authtoken_from_env'):
        options['authtoken'] = token
    if account:
        options['basic_auth'] = account
def run_image(*vars):
    image_path = vars[0]
    origins = vars[1:(num_faces+1)]
    destinations = vars[(num_faces+1):(num_faces*2)+1]
    thresholds = vars[(num_faces*2)+1:-1]
    face_mode = vars[-1]

    disable_similarity = (face_mode in ["Single Face", "Multiple Faces"])
    multiple_faces_mode = (face_mode == "Multiple Faces")

    try:
        public_url = ngrok.connect(f"127.0.0.1:{port}", **options).url()
    except Exception as e:
        print(f'Invalid ngrok authtoken? ngrok connection aborted due to: {e}\n'
              f'Your token: {token}, get the right one on https://dashboard.ngrok.com/get-started/your-authtoken')
    else:
        print(f'ngrok connected to localhost:{port}! URL: {public_url}\n'
              'You can use this link after the launch is complete.')
    faces = []
    for k in range(num_faces):
        if destinations[k] is not None:
            faces.append({
                'origin': origins[k] if not multiple_faces_mode else None,
                'destination': destinations[k],
                'threshold': thresholds[k] if not multiple_faces_mode else 0.0
            })

    return refacer.reface_image(image_path, faces, disable_similarity=disable_similarity, multiple_faces_mode=multiple_faces_mode)

def run(*vars):
    video_path = vars[0]
    origins = vars[1:(num_faces+1)]
    destinations = vars[(num_faces+1):(num_faces*2)+1]
    thresholds=vars[(num_faces*2)+1:]
    thresholds = vars[(num_faces*2)+1:-2]
    preview = vars[-2]
    face_mode = vars[-1]

    disable_similarity = (face_mode in ["Single Face", "Multiple Faces"])
    multiple_faces_mode = (face_mode == "Multiple Faces")

    faces = []
    for k in range(0,num_faces):
        if origins[k] is not None and destinations[k] is not None:
    for k in range(num_faces):
        if destinations[k] is not None:
            faces.append({
                'origin':origins[k],
                'origin': origins[k] if not multiple_faces_mode else None,
                'destination': destinations[k],
                'threshold':thresholds[k]
                'threshold': thresholds[k] if not multiple_faces_mode else 0.0
            })

    return refacer.reface(video_path,faces)
    mp4_path, gif_path = refacer.reface(video_path, faces, preview=preview, disable_similarity=disable_similarity, multiple_faces_mode=multiple_faces_mode)
    return mp4_path, gif_path if gif_path else None

origin = []
destination = []
thresholds = []
def load_first_frame(filepath):
    if filepath is None:
        return None
    frames = imageio.get_reader(filepath)
    return frames.get_data(0)

with gr.Blocks() as demo:
    with gr.Row():
        gr.Markdown("# Refacer")
    with gr.Row():
        video=gr.Video(label="Original video",format="mp4")
        video2=gr.Video(label="Refaced video",interactive=False,format="mp4")
def extract_faces_auto(filepath, refacer_instance, max_faces=5, isvideo=False):
    if filepath is None:
        return [None] * max_faces

    for i in range(0,num_faces):
        with gr.Tab(f"Face #{i+1}"):
            with gr.Row():
                origin.append(gr.Image(label="Face to replace"))
                destination.append(gr.Image(label="Destination face"))
            with gr.Row():
                thresholds.append(gr.Slider(label="Threshold",minimum=0.0,maximum=1.0,value=0.2))
    with gr.Row():
        button=gr.Button("Reface", variant="primary")
    # Check if video
    if isvideo:
        if os.path.getsize(filepath) > 5 * 1024 * 1024: # larger than 5MB
            print("Video too large for auto-extract, skipping face extraction.")
            return [None] * max_faces

    button.click(fn=run,inputs=[video]+origin+destination+thresholds,outputs=[video2])
    frame = load_first_frame(filepath)
    if frame is None:
        return [None] * max_faces

    # Create manual temp image inside ./tmp
    temp_image_path = os.path.join("./tmp", f"temp_face_extract_{int(time.time() * 1000)}.png")
    Image.fromarray(frame).save(temp_image_path)

    try:
        faces = refacer_instance.extract_faces_from_image(temp_image_path, max_faces=max_faces)
        output_faces = faces + [None] * (max_faces - len(faces))
        return output_faces
    finally:
        if os.path.exists(temp_image_path):
            try:
                os.remove(temp_image_path)
            except Exception as e:
                print(f"Warning: Could not delete temp file {temp_image_path}: {e}")

def toggle_tabs_and_faces(mode, face_tabs, origin_faces):
    if mode == "Single Face":
        tab_updates = [gr.update(visible=(i == 0)) for i in range(len(face_tabs))]
        origin_updates = [gr.update(visible=False) for _ in range(len(origin_faces))]
    elif mode == "Multiple Faces":
        tab_updates = [gr.update(visible=True) for _ in range(len(face_tabs))]
        origin_updates = [gr.update(visible=False) for _ in range(len(origin_faces))]
    else:
        tab_updates = [gr.update(visible=True) for _ in range(len(face_tabs))]
        origin_updates = [gr.update(visible=True) for _ in range(len(origin_faces))]
    return tab_updates + origin_updates

# --- UI ---

|

||||

|

||||

theme = gr.themes.Base(primary_hue="blue", secondary_hue="cyan")

|

||||

|

||||

with gr.Blocks(theme=theme, title="NeoRefacer - AI Refacer") as demo:

|

||||

with open("icon.png", "rb") as f:

|

||||

icon_data = base64.b64encode(f.read()).decode()

|

||||

icon_html = f'<img src="data:image/png;base64,{icon_data}" style="width:40px;height:40px;margin-right:10px;">'

|

||||

|

||||

with gr.Row():

|

||||

gr.Markdown(f"""

|

||||

<div style="display: flex; align-items: center;">

|

||||

{icon_html}

|

||||

<span style="font-size: 2em; font-weight: bold; color:#2563eb;">NeoRefacer</span>

|

||||

</div>

|

||||

""")

|

||||

|

||||

# --- IMAGE MODE ---

|

||||

with gr.Tab("Image Mode"):

|

||||

with gr.Row():

|

||||

image_input = gr.Image(label="Original image", type="filepath")

|

||||

image_output = gr.Image(label="Refaced image", interactive=False, type="filepath")

|

||||

|

||||

with gr.Row():

|

||||

face_mode_image = gr.Radio(

|

||||

choices=["Single Face", "Multiple Faces", "Faces By Match"],

|

||||

value="Single Face",

|

||||

label="Replacement Mode"

|

||||

)

|

||||

image_btn = gr.Button("Reface Image", variant="primary")

|

||||

|

||||

origin_image, destination_image, thresholds_image, face_tabs_image = [], [], [], []

|

||||

|

||||

for i in range(num_faces):

|

||||

with gr.Tab(f"Face #{i+1}") as tab:

|

||||

with gr.Row():

|

||||

origin = gr.Image(label="Face to replace")

|

||||

destination = gr.Image(label="Destination face")

|

||||

threshold = gr.Slider(label="Threshold", minimum=0.0, maximum=1.0, value=0.2)

|

||||

origin_image.append(origin)

|

||||

destination_image.append(destination)

|

||||

thresholds_image.append(threshold)

|

||||

face_tabs_image.append(tab)

|

||||

|

||||

face_mode_image.change(

|

||||

fn=lambda mode: toggle_tabs_and_faces(mode, face_tabs_image, origin_image),

|

||||

inputs=[face_mode_image],

|

||||

outputs=face_tabs_image + origin_image

|

||||

)

|

||||

|

||||

demo.load(

|

||||

fn=lambda: toggle_tabs_and_faces("Single Face", face_tabs_image, origin_image),

|

||||

inputs=None,

|

||||

outputs=face_tabs_image + origin_image

|

||||

)

|

||||

|

||||

image_btn.click(

|

||||

fn=run_image,

|

||||

inputs=[image_input] + origin_image + destination_image + thresholds_image + [face_mode_image],

|

||||

outputs=[image_output]

|

||||

)

|

||||

|

||||

image_input.change(

|

||||

fn=lambda filepath: extract_faces_auto(filepath, refacer, max_faces=num_faces),

|

||||

inputs=image_input,

|

||||

outputs=origin_image

|

||||

)

|

||||

|

||||

# --- GIF MODE ---

|

||||

with gr.Tab("GIF Mode"):

|

||||

with gr.Row():

|

||||

gif_input = gr.File(label="Original GIF", file_types=[".gif"])

|

||||

gif_preview = gr.Video(label="GIF Preview", interactive=False)

|

||||

gif_output = gr.Video(label="Refaced GIF (MP4)", interactive=False, format="mp4")

|

||||

gif_file_output = gr.Image(label="Refaced GIF (GIF)", type="filepath")

|

||||

|

||||

with gr.Row():

|

||||

face_mode_gif = gr.Radio(

|

||||

choices=["Single Face", "Multiple Faces", "Faces By Match"],

|

||||

value="Single Face",

|

||||

label="Replacement Mode"

|

||||

)

|

||||

gif_btn = gr.Button("Reface GIF", variant="primary")

|

||||

|

||||

preview_checkbox_gif = gr.Checkbox(label="Preview Generation (skip 90% of frames)", value=False)

|

||||

|

||||

origin_gif, destination_gif, thresholds_gif, face_tabs_gif = [], [], [], []

|

||||

|

||||

for i in range(num_faces):

|

||||

with gr.Tab(f"Face #{i+1}") as tab:

|

||||

with gr.Row():

|

||||

origin = gr.Image(label="Face to replace")

|

||||

destination = gr.Image(label="Destination face")

|

||||

threshold = gr.Slider(label="Threshold", minimum=0.0, maximum=1.0, value=0.2)

|

||||

origin_gif.append(origin)

|

||||

destination_gif.append(destination)

|

||||

thresholds_gif.append(threshold)

|

||||

face_tabs_gif.append(tab)

|

||||

|

||||

face_mode_gif.change(

|

||||

fn=lambda mode: toggle_tabs_and_faces(mode, face_tabs_gif, origin_gif),

|

||||

inputs=[face_mode_gif],

|

||||

outputs=face_tabs_gif + origin_gif

|

||||

)

|

||||

|

||||

demo.load(

|

||||

fn=lambda: toggle_tabs_and_faces("Single Face", face_tabs_gif, origin_gif),

|

||||

inputs=None,

|

||||

outputs=face_tabs_gif + origin_gif

|

||||

)

|

||||

|

||||

gif_btn.click(

|

||||

fn=lambda *args: run(*args),

|

||||

inputs=[gif_input] + origin_gif + destination_gif + thresholds_gif + [preview_checkbox_gif, face_mode_gif],

|

||||

outputs=[gif_output, gif_file_output]

|

||||

)

|

||||

|

||||

gif_input.change(

|

||||

fn=lambda filepath: extract_faces_auto(filepath, refacer, max_faces=num_faces),

|

||||

inputs=gif_input,

|

outputs=origin_gif
)
gif_input.change(
fn=lambda file: file,
inputs=gif_input,
outputs=[gif_preview]
)

# --- VIDEO MODE ---
with gr.Tab("Video Mode"):
with gr.Row():
video_input = gr.Video(label="Original video", format="mp4")
video_output = gr.Video(label="Refaced Video", interactive=False, format="mp4")

with gr.Row():
face_mode_video = gr.Radio(
choices=["Single Face", "Multiple Faces", "Faces By Match"],
value="Single Face",
label="Replacement Mode"
)
video_btn = gr.Button("Reface Video", variant="primary")

preview_checkbox_video = gr.Checkbox(label="Preview Generation (skip 90% of frames)", value=False)

origin_video, destination_video, thresholds_video, face_tabs_video = [], [], [], []

for i in range(num_faces):
with gr.Tab(f"Face #{i+1}") as tab:
with gr.Row():
origin = gr.Image(label="Face to replace")
destination = gr.Image(label="Destination face")
threshold = gr.Slider(label="Threshold", minimum=0.0, maximum=1.0, value=0.2)
origin_video.append(origin)
destination_video.append(destination)
thresholds_video.append(threshold)
face_tabs_video.append(tab)

face_mode_video.change(
fn=lambda mode: toggle_tabs_and_faces(mode, face_tabs_video, origin_video),
inputs=[face_mode_video],
outputs=face_tabs_video + origin_video
)

demo.load(
fn=lambda: toggle_tabs_and_faces("Single Face", face_tabs_video, origin_video),
inputs=None,
outputs=face_tabs_video + origin_video
)

video_input.change(
fn=lambda filepath: extract_faces_auto(filepath, refacer, max_faces=num_faces, isvideo=True),
inputs=video_input,
outputs=origin_video
)

video_btn.click(
fn=lambda *args: run(*args),
inputs=[video_input] + origin_video + destination_video + thresholds_video + [preview_checkbox_video, face_mode_video],
outputs=[video_output, gr.File(visible=False)]
)

# --- ngrok connect (optional) ---
if args.ngrok:
def connect(token, port, options):
try:
public_url = ngrok.connect(f"127.0.0.1:{port}", **options).url()
print(f'ngrok URL: {public_url}')
except Exception as e:
print(f'ngrok connection aborted: {e}')

if args.ngrok is not None:
connect(args.ngrok, args.server_port, {'region': args.ngrok_region, 'authtoken_from_env': False})

#demo.launch(share=True,server_name="0.0.0.0", show_error=True)
demo.queue().launch(show_error=True,share=args.share_gradio,server_name=args.server_name,server_port=args.server_port)
# --- Launch app ---
demo.queue().launch(favicon_path="icon.png", show_error=True, share=args.share_gradio, server_name=args.server_name, server_port=args.server_port)
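The `video_btn.click` wiring above sends `run` a single flat argument list: the video, then `num_faces` origin images, `num_faces` destination images, `num_faces` thresholds, and finally the preview checkbox and the replacement mode. `run` itself is not part of this diff, so the helper below is only a sketch, assuming `run` receives its arguments in exactly that order; `split_run_args` is a hypothetical name. It regroups the arguments into per-face dictionaries with the `origin`, `destination` and `threshold` keys that `prepare_faces` reads.

```python
from typing import Any, Dict, List, Sequence


def split_run_args(args: Sequence[Any], num_faces: int) -> Dict[str, Any]:
    """Regroup the flat Gradio inputs list into named parts (hypothetical helper).

    Assumed layout, mirroring the inputs list built for video_btn.click:
    [video, origin_0..origin_{n-1}, dest_0..dest_{n-1},
     thr_0..thr_{n-1}, preview, mode]
    """
    expected = 1 + 3 * num_faces + 2
    if len(args) != expected:
        raise ValueError(f"expected {expected} arguments, got {len(args)}")

    video = args[0]
    origins = list(args[1 : 1 + num_faces])
    destinations = list(args[1 + num_faces : 1 + 2 * num_faces])
    thresholds = list(args[1 + 2 * num_faces : 1 + 3 * num_faces])
    preview, mode = args[-2], args[-1]

    # One dict per face slot, using the keys prepare_faces() looks up.
    faces: List[Dict[str, Any]] = [
        {"origin": o, "destination": d, "threshold": t}
        for o, d, t in zip(origins, destinations, thresholds)
    ]
    return {"video": video, "faces": faces, "preview": preview, "mode": mode}


if __name__ == "__main__":
    flat = ["clip.mp4", "o1", "o2", "d1", "d2", 0.2, 0.3, False, "Single Face"]
    parts = split_run_args(flat, num_faces=2)
    print(parts["faces"][1])  # {'origin': 'o2', 'destination': 'd2', 'threshold': 0.3}
```

Checking the length up front makes a mismatch between the UI wiring and `num_faces` fail loudly instead of silently shifting thresholds into the destination slots.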

@@ -1,20 +0,0 @@
FROM nvidia/cuda:11.8.0-cudnn8-runtime-ubuntu22.04

# Always use UTC on a server
RUN ln -snf /usr/share/zoneinfo/UTC /etc/localtime && echo UTC > /etc/timezone

RUN DEBIAN_FRONTEND=noninteractive apt update && apt install -y python3 python3-pip python3-tk git ffmpeg nvidia-cuda-toolkit nvidia-container-runtime libnvidia-decode-525-server wget unzip
RUN wget https://github.com/deepinsight/insightface/releases/download/v0.7/buffalo_l.zip -O /tmp/buffalo_l.zip && \
mkdir -p /root/.insightface/models/buffalo_l && \
cd /root/.insightface/models/buffalo_l && \
unzip /tmp/buffalo_l.zip && \
rm -f /tmp/buffalo_l.zip

RUN pip install nvidia-tensorrt
RUN git clone https://github.com/xaviviro/refacer && cd refacer && pip install -r requirements-GPU.txt

WORKDIR /refacer

# Test the following commands in the container to make sure the GPU setup works
# nvidia-smi
# python3 -c "import tensorflow as tf; print(tf.config.list_physical_devices('GPU'))"

@@ -1,13 +0,0 @@
#!/bin/bash
# Run this script from within the refacer/docker folder.
# You'll need inswapper_128.onnx from one of:
# https://drive.google.com/file/d/1eu60OrRtn4WhKrzM4mQv4F3rIuyUXqfl/view?usp=drive_link
# or https://drive.google.com/file/d/1jbDUGrADco9A1MutWjO6d_1dwizh9w9P/view?usp=sharing
# or https://mega.nz/file/9l8mGDJA#FnPxHwpdhDovDo6OvbQjhHd2nDAk8_iVEgo3mpHLG6U
# or https://1drv.ms/u/s!AsHA3Xbnj6uAgxhb_tmQ7egHACOR?e=CPoThO
# or https://civitai.com/models/80324?modelVersionId=85159

docker stop -t 0 refacer
docker build -t refacer -f Dockerfile.nvidia . && \
docker run --rm --name refacer -v $(pwd)/..:/refacer -p 7860:7860 --gpus all refacer python3 app.py --server_name 0.0.0.0 &
sleep 2 && google-chrome --new-window "http://127.0.0.1:7860" &

@@ -1,65 +0,0 @@
{
"cells": [
{
"attachments": {},
"cell_type": "markdown",
"metadata": {
"id": "ghPlUjrD_xmd"
},
"source": [
"# Refacer\n",
"\n",
"[](https://colab.research.google.com/github/xaviviro/refacer/blob/master/notebooks/Refacer_colab.ipynb)\n",
"\n",
"[Refacer](https://github.com/xaviviro/refacer) is an amazing tool that allows you to create deepfakes with multiple faces, giving you the option to choose which face to replace, all in one click!\n",
"\n",
"If you find Refacer helpful, consider giving it a star on [GitHub](https://github.com/xaviviro/refacer). Your support helps to keep the project going!\n",
"\n",
"Before using this Colab or the Refacer tool, please make sure to read the [Disclaimer](https://github.com/xaviviro/refacer#disclaimer) in the GitHub repository. It's very important to understand the terms of use, and the ethical implications of creating deepfakes.\n",
"\n",
"In this Colab, you'll be able to try out Refacer without needing to install anything on your own machine. Enjoy!\n",
"\n",
"*If you encounter any issues or have any suggestions, feel free to [open an issue](https://github.com/xaviviro/refacer/issues/new) on the GitHub repository.*"
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {
"colab": {
"base_uri": "https://localhost:8080/"
},
"id": "r-vlpYRr_6W7",
"outputId": "2f2ba046-082f-422c-a391-3d6991276830"
},
"outputs": [],
"source": [
"!pip uninstall numpy -y -q\n",
"!pip install --disable-pip-version-check --root-user-action=ignore ngrok numpy==1.24.3 onnxruntime-gpu gradio insightface==0.7.3 ffmpeg_python opencv_python -q --force\n",
"\n",
"!git clone https://github.com/xaviviro/refacer.git\n",
"%cd refacer\n",
"\n",
"!wget --content-disposition \"https://huggingface.co/deepinsight/inswapper/resolve/main/inswapper_128.onnx\"\n",
"\n",
"!python app.py --share_gradio --colab_performance\n"
]
}
],
"metadata": {
"accelerator": "GPU",
"colab": {
"machine_shape": "hm",
"provenance": []
},
"kernelspec": {
"display_name": "Python 3",
"name": "python3"
},
"language_info": {
"name": "python"
}
},
"nbformat": 4,
"nbformat_minor": 0
}

302
refacer.py

@@ -1,13 +1,12 @@
import cv2
import onnxruntime as rt
import sys
from insightface.app import FaceAnalysis
sys.path.insert(1, './recognition')
from scrfd import SCRFD
from arcface_onnx import ArcFaceONNX
import os.path as osp
import os
from pathlib import Path
import requests
from tqdm import tqdm
import ffmpeg
import random
@@ -20,85 +19,135 @@ from insightface.app.common import Face
from insightface.utils.storage import ensure_available
import re
import subprocess
from PIL import Image
import numpy as np
import time
from codeformer_wrapper import enhance_image
import tempfile

gc = __import__('gc')

# Preload NVIDIA DLLs on Windows
if sys.platform in ("win32", "win64"):
if hasattr(os, "add_dll_directory"):
os.add_dll_directory(r"C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v12.6\bin")
os.add_dll_directory(r"C:\Program Files\NVIDIA\CUDNN\v9.4\bin\12.6")

if hasattr(rt, "preload_dlls"):
rt.preload_dlls()

class RefacerMode(Enum):
CPU, CUDA, COREML, TENSORRT = range(1, 5)

class Refacer:
def __init__(self, force_cpu=False, colab_performance=False):
self.disable_similarity = False
self.multiple_faces_mode = False
self.first_face = False
self.force_cpu = force_cpu
self.colab_performance = colab_performance
self.use_num_cpus = mp.cpu_count()
self.__check_encoders()
self.__check_providers()
self.total_mem = psutil.virtual_memory().total
self.__init_apps()

def __download_with_progress(self, url, output_path):
response = requests.get(url, stream=True)
total_size = int(response.headers.get('content-length', 0))
block_size = 1024
t = tqdm(total=total_size, unit='iB', unit_scale=True, desc=f"Downloading {os.path.basename(output_path)}")

with open(output_path, 'wb') as f:
for data in response.iter_content(block_size):
t.update(len(data))
f.write(data)
t.close()

if total_size != 0 and t.n != total_size:
raise Exception("ERROR, something went wrong downloading the model!")

def __check_providers(self):
if self.force_cpu:
self.providers = ['CPUExecutionProvider']
else:
self.providers = rt.get_available_providers()
self.providers = ['CUDAExecutionProvider', 'CPUExecutionProvider']

rt.set_default_logger_severity(4)
self.sess_options = rt.SessionOptions()
self.sess_options.execution_mode = rt.ExecutionMode.ORT_SEQUENTIAL
self.sess_options.graph_optimization_level = rt.GraphOptimizationLevel.ORT_ENABLE_ALL

if len(self.providers) == 1 and 'CPUExecutionProvider' in self.providers:
if 'CPUExecutionProvider' in self.providers:
self.mode = RefacerMode.CPU
self.use_num_cpus = mp.cpu_count() - 1
self.sess_options.intra_op_num_threads = int(self.use_num_cpus / 3)
print(f"CPU mode with providers {self.providers}")
elif self.colab_performance:
self.mode = RefacerMode.TENSORRT
self.use_num_cpus = mp.cpu_count() - 1
self.sess_options.intra_op_num_threads = int(self.use_num_cpus / 3)
print(f"TENSORRT mode with providers {self.providers}")
elif 'CoreMLExecutionProvider' in self.providers:
self.mode = RefacerMode.COREML
self.use_num_cpus = mp.cpu_count() - 1
self.sess_options.intra_op_num_threads = int(self.use_num_cpus / 3)
print(f"CoreML mode with providers {self.providers}")
elif 'CUDAExecutionProvider' in self.providers:
else:
self.mode = RefacerMode.CUDA
self.use_num_cpus = 2
self.sess_options.intra_op_num_threads = 1
if 'TensorrtExecutionProvider' in self.providers:
self.providers.remove('TensorrtExecutionProvider')
print(f"CUDA mode with providers {self.providers}")
"""
elif 'TensorrtExecutionProvider' in self.providers:
self.mode = RefacerMode.TENSORRT
#self.use_num_cpus = 1
#self.sess_options.intra_op_num_threads = 1
self.use_num_cpus = mp.cpu_count()-1
self.sess_options.intra_op_num_threads = int(self.use_num_cpus/3)
print(f"TENSORRT mode with providers {self.providers}")
"""

print(f"Using providers: {self.providers}")
print(f"Mode: {self.mode}")
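As listed in this diff, the result of `rt.get_available_providers()` in `__check_providers` is followed by a fixed `['CUDAExecutionProvider', 'CPUExecutionProvider']`. If that fixed list is the one that ships, the CoreML branch can never be selected, and CUDA is requested even when the installed onnxruntime build lacks it. A minimal sketch of an alternative, filtering a preference order against what onnxruntime actually reports, is shown below; `choose_providers` and the `PREFERRED` order are assumptions, not project code. The available list is passed in as a parameter so the function runs without onnxruntime installed.

```python
from typing import Iterable, List

# Assumed preference order for this sketch. TensorRT is left out because
# the CUDA branch in the diff removes it from the provider list anyway.
PREFERRED = ["CUDAExecutionProvider", "CoreMLExecutionProvider", "CPUExecutionProvider"]


def choose_providers(available: Iterable[str], force_cpu: bool = False) -> List[str]:
    """Return the preferred providers that are actually available, CPU last."""
    if force_cpu:
        return ["CPUExecutionProvider"]
    available_set = set(available)
    chosen = [p for p in PREFERRED if p in available_set]
    # Keep CPU as an explicit fallback so the list is never empty.
    if "CPUExecutionProvider" not in chosen:
        chosen.append("CPUExecutionProvider")
    return chosen
```

Inside the class this would be called as `choose_providers(rt.get_available_providers(), self.force_cpu)`, after which the existing `'CoreMLExecutionProvider' in self.providers` and `'CUDAExecutionProvider' in self.providers` checks reflect the real hardware.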

def __init_apps(self):
assets_dir = ensure_available('models', 'buffalo_l', root='~/.insightface')

model_path = os.path.join(assets_dir, 'det_10g.onnx')
sess_face = rt.InferenceSession(model_path, self.sess_options, providers=self.providers)
print(f"Face Detector providers: {sess_face.get_providers()}")
self.face_detector = SCRFD(model_path, sess_face)
self.face_detector.prepare(0, input_size=(640, 640))

model_path = os.path.join(assets_dir, 'w600k_r50.onnx')
sess_rec = rt.InferenceSession(model_path, self.sess_options, providers=self.providers)
print(f"Face Recognizer providers: {sess_rec.get_providers()}")
self.rec_app = ArcFaceONNX(model_path, sess_rec)
self.rec_app.prepare(0)

model_path = 'inswapper_128.onnx'
model_dir = os.path.join('weights', 'inswapper')
os.makedirs(model_dir, exist_ok=True)
model_path = os.path.join(model_dir, 'inswapper_128.onnx')

if not os.path.exists(model_path):
print(f"Model {model_path} not found. Downloading from HuggingFace...")
url = "https://huggingface.co/ezioruan/inswapper_128.onnx/resolve/main/inswapper_128.onnx"
try:
self.__download_with_progress(url, model_path)
print(f"Downloaded {model_path}")
except Exception as e:
raise RuntimeError(f"Failed to download {model_path}. Error: {e}")

sess_swap = rt.InferenceSession(model_path, self.sess_options, providers=self.providers)
print(f"Face Swapper providers: {sess_swap.get_providers()}")
self.face_swapper = INSwapper(model_path, sess_swap)
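`__init_apps` downloads `inswapper_128.onnx` only when the file is missing, and `__download_with_progress` writes straight to the final path. If a download is interrupted or comes up short, the truncated file stays on disk and the `os.path.exists` check skips the download on the next start. A common remedy, sketched below, is to write to a temporary `.part` file and rename it only after the size check passes. This assumes the caller supplies an iterable of byte chunks (for example `response.iter_content(block_size)` from `requests`); `save_atomically` is a hypothetical helper, not part of the project.

```python
import os
from typing import Iterable, Optional


def save_atomically(chunks: Iterable[bytes], output_path: str,
                    expected_size: Optional[int] = None) -> int:
    """Write chunks to output_path via a temporary .part file.

    The final file only appears once every chunk has been written and the
    byte count matches expected_size (when a non-zero size is given).
    Returns the number of bytes written.
    """
    part_path = output_path + ".part"
    written = 0
    try:
        with open(part_path, "wb") as f:
            for chunk in chunks:
                if chunk:  # skip empty keep-alive chunks
                    f.write(chunk)
                    written += len(chunk)
        if expected_size and written != expected_size:
            raise IOError(f"incomplete download: {written} of {expected_size} bytes")
        # Rename into place; atomic on POSIX when both paths share a filesystem.
        os.replace(part_path, output_path)
    except BaseException:
        # Leave nothing behind that a later os.path.exists() check could trust.
        if os.path.exists(part_path):
            os.remove(part_path)
        raise
    return written
```

With this shape, `expected_size` plays the role of the `content-length` header in `__download_with_progress`, and a failed attempt simply leaves no model file, so the next start retries the download.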

def prepare_faces(self, faces):
def prepare_faces(self, faces, disable_similarity=False, multiple_faces_mode=False):
self.replacement_faces = []
self.disable_similarity = disable_similarity
self.multiple_faces_mode = multiple_faces_mode

for face in faces:
#image1 = cv2.imread(face.origin)
if "origin" in face:
if "destination" not in face or face["destination"] is None:
print("Skipping face config: No destination face provided.")
continue

_faces = self.__get_faces(face['destination'], max_num=1)
if len(_faces) < 1:
raise Exception('No face detected on "Destination face" image')

if multiple_faces_mode:
self.replacement_faces.append((None, _faces[0], 0.0))
else:
if "origin" in face and face["origin"] is not None and not disable_similarity:
face_threshold = face['threshold']
bboxes1, kpss1 = self.face_detector.autodetect(face['origin'], max_num=1)
if len(kpss1) < 1:
@@ -108,55 +157,49 @@ class Refacer:
face_threshold = 0
self.first_face = True
feat_original = None
print('No origin image: First face change')
#image2 = cv2.imread(face.destination)
_faces = self.__get_faces(face['destination'],max_num=1)
if len(_faces)<1:
raise Exception('No face detected on "Destination face" image')

self.replacement_faces.append((feat_original, _faces[0], face_threshold))

def __convert_video(self,video_path,output_video_path):
if self.video_has_audio:
print("Merging audio with the refaced video...")
new_path = output_video_path + str(random.randint(0,999)) + "_c.mp4"
#stream = ffmpeg.input(output_video_path)
in1 = ffmpeg.input(output_video_path)
in2 = ffmpeg.input(video_path)
out = ffmpeg.output(in1.video, in2.audio, new_path,video_bitrate=self.ffmpeg_video_bitrate,vcodec=self.ffmpeg_video_encoder)
out.run(overwrite_output=True,quiet=True)
else:
new_path = output_video_path
print("The video doesn't have audio, so post-processing is not necessary")

print(f"The process has finished.\nThe refaced video can be found at {os.path.abspath(new_path)}")
return new_path

def __get_faces(self, frame, max_num=0):

bboxes, kpss = self.face_detector.detect(frame, max_num=max_num, metric='default')

if bboxes.shape[0] == 0:
return []
ret = []
for i in range(bboxes.shape[0]):
bbox = bboxes[i, 0:4]
det_score = bboxes[i, 4]
kps = None
if kpss is not None:
kps = kpss[i]
kps = kpss[i] if kpss is not None else None
face = Face(bbox=bbox, kps=kps, det_score=det_score)
face.embedding = self.rec_app.get(frame, kps)
ret.append(face)
return ret

def process_first_face(self, frame):
faces = self.__get_faces(frame,max_num=1)
if len(faces) != 0:
frame = self.face_swapper.get(frame, faces[0], self.replacement_faces[0][1], paste_back=True)
faces = self.__get_faces(frame, max_num=0)
if not faces:
return frame

if self.disable_similarity:
for face in faces:
frame = self.face_swapper.get(frame, face, self.replacement_faces[0][1], paste_back=True)
return frame

def process_faces(self, frame):
faces = self.__get_faces(frame, max_num=0)
if not faces:
return frame

faces = sorted(faces, key=lambda face: face.bbox[0]) # Sort left to right

if self.multiple_faces_mode:
for idx, face in enumerate(faces):
if idx >= len(self.replacement_faces):
break
frame = self.face_swapper.get(frame, face, self.replacement_faces[idx][1], paste_back=True)
elif self.disable_similarity:
for face in faces:
frame = self.face_swapper.get(frame, face, self.replacement_faces[0][1], paste_back=True)
else:
for rep_face in self.replacement_faces:
for i in range(len(faces) - 1, -1, -1):
sim = self.rec_app.compute_sim(rep_face[0], faces[i].embedding)
@@ -166,13 +209,6 @@ class Refacer:
break
return frame
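In the default (similarity) branch of `process_faces`, each replacement face's origin embedding is scored against every detected face with `self.rec_app.compute_sim`, walking the detections in reverse; what happens with the score falls inside the elided hunk. The plain-Python sketch below assumes `compute_sim` is cosine similarity (as insightface's `ArcFaceONNX.compute_sim` is), that a detection matches when its score reaches the reference's threshold (the third element of each `replacement_faces` tuple), and that each detection is consumed by at most one reference. `cosine_similarity` and `match_faces` are hypothetical helpers, and whether the original compares with `>=` or `>` is not visible here.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def match_faces(references: List[Tuple[Sequence[float], float]],
                detected: List[Sequence[float]]) -> Dict[int, int]:
    """Greedily pair each (embedding, threshold) reference with one detection.

    Returns {detected_index: reference_index}. Detections are scanned from
    last to first and removed once matched, so each is used at most once.
    """
    remaining = list(range(len(detected)))
    assignment: Dict[int, int] = {}
    for ref_idx, (ref_emb, threshold) in enumerate(references):
        # Walk backwards so popping an entry does not disturb the loop.
        for pos in range(len(remaining) - 1, -1, -1):
            det_idx = remaining[pos]
            if cosine_similarity(ref_emb, detected[det_idx]) >= threshold:
                assignment[det_idx] = ref_idx
                remaining.pop(pos)
                break  # assumed: one detection per reference
    return assignment
```

Because matching is greedy, the order of `replacement_faces` matters: an earlier reference with a loose threshold can claim a face that a later, stricter reference would have matched better.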

def __check_video_has_audio(self,video_path):
self.video_has_audio = False
probe = ffmpeg.probe(video_path)
audio_stream = next((stream for stream in probe['streams'] if stream['codec_type'] == 'audio'), None)
if audio_stream is not None:
self.video_has_audio = True

def reface_group(self, faces, frames, output):
with ThreadPoolExecutor(max_workers=self.use_num_cpus) as executor:
if self.first_face:
@@ -182,15 +218,26 @@ class Refacer:
for result in results:
output.write(result)

def reface(self, video_path, faces):
def __check_video_has_audio(self, video_path):
self.video_has_audio = False
probe = ffmpeg.probe(video_path)
audio_stream = next((stream for stream in probe['streams'] if stream['codec_type'] == 'audio'), None)
if audio_stream is not None:
self.video_has_audio = True

def reface(self, video_path, faces, preview=False, disable_similarity=False, multiple_faces_mode=False):
original_name = osp.splitext(osp.basename(video_path))[0]
timestamp = str(int(time.time()))
filename = f"{original_name}_preview.mp4" if preview else f"{original_name}_{timestamp}.mp4"

self.__check_video_has_audio(video_path)
output_video_path = os.path.join('out',Path(video_path).name)
self.prepare_faces(faces)
os.makedirs("output", exist_ok=True)
output_video_path = os.path.join('output', filename)
self.prepare_faces(faces, disable_similarity=disable_similarity, multiple_faces_mode=multiple_faces_mode)
self.first_face = False if multiple_faces_mode else (faces[0].get("origin") is None or disable_similarity)

cap = cv2.VideoCapture(video_path)
cap = cv2.VideoCapture(video_path, cv2.CAP_FFMPEG)
total_frames = int(cap.get(cv2.CAP_PROP_FRAME_COUNT))
print(f"Total frames: {total_frames}")

fps = cap.get(cv2.CAP_PROP_FPS)
frame_width = int(cap.get(cv2.CAP_PROP_FRAME_WIDTH))
frame_height = int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))
@@ -199,64 +246,135 @@ class Refacer:
output = cv2.VideoWriter(output_video_path, fourcc, fps, (frame_width, frame_height))

frames = []
self.k = 1
frame_index = 0
skip_rate = 10 if preview else 1

with tqdm(total=total_frames, desc="Extracting frames") as pbar:
while cap.isOpened():
flag, frame = cap.read()
if flag and len(frame)>0:
frames.append(frame.copy())
pbar.update()
else:
if not flag:
break
if (len(frames) > 1000):
if frame_index % skip_rate == 0:
frames.append(frame)
if len(frames) > 300:
self.reface_group(faces, frames, output)
frames = []
gc.collect()
frame_index += 1
pbar.update()

cap.release()
pbar.close()

if frames:
self.reface_group(faces, frames, output)
frames=[]
output.release()

return self.__convert_video(video_path,output_video_path)
converted_path = self.__convert_video(video_path, output_video_path, preview=preview)

if video_path.lower().endswith(".gif"):
gif_output_path = converted_path.replace(".mp4", ".gif")
self.__generate_gif(converted_path, gif_output_path)
return converted_path, gif_output_path

return converted_path, None
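The new reading loop in `reface` keeps every `skip_rate`-th frame (10 in preview mode, which is where the "skip 90% of frames" checkbox label comes from), hands the buffer to `reface_group` once it holds more than 300 frames, and flushes a final partial batch after the loop. The generator below isolates that sampling and batching so it can be checked without OpenCV; `sample_and_batch` is a hypothetical name. One consequence worth checking: the `cv2.VideoWriter` in this diff is opened with the source `fps`, so unless that is adjusted elsewhere, a preview written from every tenth frame would play back about ten times faster than the original.

```python
from typing import Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def sample_and_batch(frames: Iterable[T], preview: bool = False,
                     flush_above: int = 300) -> Iterator[List[T]]:
    """Yield batches of kept frames.

    Keeps every 10th frame in preview mode (every frame otherwise), yields
    a batch as soon as it holds more than `flush_above` frames, and finally
    yields whatever is left over.
    """
    skip_rate = 10 if preview else 1
    batch: List[T] = []
    for frame_index, frame in enumerate(frames):
        if frame_index % skip_rate == 0:
            batch.append(frame)
            if len(batch) > flush_above:
                yield batch
                batch = []  # start a fresh buffer, as reface() does
    if batch:
        yield batch
```

Usage mirrors the loop above: `for batch in sample_and_batch(frame_iter, preview): self.reface_group(faces, batch, output)`, where `frame_iter` would be any generator wrapping `cap.read()`.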

def __generate_gif(self, video_path, gif_output_path):
print(f"Generating GIF at {gif_output_path}")
(
ffmpeg
.input(video_path)
.output(gif_output_path, vf='fps=10,scale=512:-1:flags=lanczos', loop=0)
.overwrite_output()
.run(quiet=True)
)

def __convert_video(self, video_path, output_video_path, preview=False):
if self.video_has_audio and not preview:
new_path = output_video_path + str(random.randint(0, 999)) + "_c.mp4"
in1 = ffmpeg.input(output_video_path)
in2 = ffmpeg.input(video_path)
out = ffmpeg.output(in1.video, in2.audio, new_path, video_bitrate=self.ffmpeg_video_bitrate, vcodec=self.ffmpeg_video_encoder)
out.run(overwrite_output=True, quiet=True)
else:
new_path = output_video_path
print(f"Refaced video saved at: {os.path.abspath(new_path)}")
return new_path
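`__convert_video` takes the video stream from the refaced file and the audio stream from the original upload, re-encoding with the encoder and bitrate chosen by `__check_encoders`. The output name is formed by appending a random number and `_c.mp4` to a path that already ends in `.mp4`, so `output/clip_1700000000.mp4` becomes something like `output/clip_1700000000.mp4123_c.mp4`. The sketch below builds a comparable plain argument list for `subprocess.run` and derives the name with `os.path.splitext` instead; the exact flags ffmpeg-python generates may differ, so treat it as an approximation, and `build_mux_command` as a hypothetical helper.

```python
import os
from typing import List, Tuple


def build_mux_command(refaced_path: str, source_path: str,
                      vcodec: str = "libx264",
                      video_bitrate: str = "0") -> Tuple[List[str], str]:
    """Return (ffmpeg argv, output path) for putting source audio on the refaced video.

    Video is taken from input 0 (the refaced file) and audio from input 1
    (the original upload), mirroring in1.video / in2.audio in __convert_video.
    Only call this when the source actually has an audio stream.
    """
    root, _ = os.path.splitext(refaced_path)
    out_path = f"{root}_c.mp4"  # e.g. output/clip_1700000000_c.mp4
    argv = [
        "ffmpeg", "-y",            # overwrite, like overwrite_output=True
        "-i", refaced_path,
        "-i", source_path,
        "-map", "0:v", "-map", "1:a",
        "-c:v", vcodec,
        "-b:v", video_bitrate,
        out_path,
    ]
    return argv, out_path
```

The command could then be run with `subprocess.run(argv, check=True, capture_output=True)`, the same call style `__try_ffmpeg_encoder` already uses.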

def reface_image(self, image_path, faces, disable_similarity=False, multiple_faces_mode=False):
self.prepare_faces(faces, disable_similarity=disable_similarity, multiple_faces_mode=multiple_faces_mode)
self.first_face = False if multiple_faces_mode else (faces[0].get("origin") is None or disable_similarity)

bgr_image = cv2.imread(image_path)
if bgr_image is None:
raise ValueError("Failed to read input image")

refaced_bgr = self.process_first_face(bgr_image.copy()) if self.first_face else self.process_faces(bgr_image.copy())
refaced_rgb = cv2.cvtColor(refaced_bgr, cv2.COLOR_BGR2RGB)
pil_img = Image.fromarray(refaced_rgb)
os.makedirs("output", exist_ok=True)
original_name = osp.splitext(osp.basename(image_path))[0]
timestamp = str(int(time.time()))
filename = f"{original_name}_{timestamp}.jpg"
output_path = os.path.join("output", filename)
pil_img.save(output_path, format='JPEG', quality=100, subsampling=0)
output_path = enhance_image(output_path)
print(f"Saved refaced image to {output_path}")
return output_path

def extract_faces_from_image(self, image_path, max_faces=5):
frame = cv2.imread(image_path)
if frame is None:
raise ValueError("Failed to read input image for face extraction.")

faces = self.__get_faces(frame, max_num=max_faces)
cropped_faces = []

for face in faces:
x1, y1, x2, y2 = map(int, face.bbox)
x1 = max(x1, 0)
y1 = max(y1, 0)
x2 = min(x2, frame.shape[1])
y2 = min(y2, frame.shape[0])

cropped = frame[y1:y2, x1:x2]
pil_img = Image.fromarray(cv2.cvtColor(cropped, cv2.COLOR_BGR2RGB))

temp_file = tempfile.NamedTemporaryFile(delete=False, suffix=".png")
pil_img.save(temp_file.name)
cropped_faces.append(temp_file.name)

if len(cropped_faces) >= max_faces:
break

return cropped_faces
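`extract_faces_from_image` clamps each detector box to the image before cropping. In the rare case where a box ends up with no area after clamping, `frame[y1:y2, x1:x2]` is an empty array and the following `cv2.cvtColor` call is likely to raise. The sketch below performs the same clamping (including the `int` truncation) but returns `None` for such boxes so the caller can skip them; `clamp_box` is a hypothetical helper. Separately, files created with `NamedTemporaryFile(delete=False)` remain in the temp directory until something removes them explicitly.

```python
from typing import Optional, Sequence, Tuple


def clamp_box(bbox: Sequence[float], width: int,
              height: int) -> Optional[Tuple[int, int, int, int]]:
    """Clamp an (x1, y1, x2, y2) box to a width x height image.

    Uses the same int() truncation and max/min clamping as
    extract_faces_from_image, but returns None when nothing of the box
    is left inside the image instead of producing an empty crop.
    """
    x1, y1, x2, y2 = (int(v) for v in bbox[:4])
    x1, y1 = max(x1, 0), max(y1, 0)
    x2, y2 = min(x2, width), min(y2, height)
    if x2 <= x1 or y2 <= y1:
        return None
    return x1, y1, x2, y2
```

In the loop this would be used as `box = clamp_box(face.bbox, frame.shape[1], frame.shape[0])`, cropping with `frame[y1:y2, x1:x2]` only when `box` is not `None`.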

def __try_ffmpeg_encoder(self, vcodec):
print(f"Trying FFMPEG {vcodec} encoder")
command = ['ffmpeg', '-y', '-f', 'lavfi', '-i', 'testsrc=duration=1:size=1280x720:rate=30', '-vcodec', vcodec, 'testsrc.mp4']
try:
subprocess.run(command, check=True, capture_output=True).stderr
except subprocess.CalledProcessError as e:
print(f"FFMPEG {vcodec} encoder doesn't work -> Disabled.")
except subprocess.CalledProcessError:
return False
print(f"FFMPEG {vcodec} encoder works")
return True

def __check_encoders(self):
self.ffmpeg_video_encoder = 'libx264'
self.ffmpeg_video_bitrate = '0'

pattern = r"encoders: ([a-zA-Z0-9_]+(?: [a-zA-Z0-9_]+)*)"
command = ['ffmpeg', '-codecs', '--list-encoders']
commandout = subprocess.run(command, check=True, capture_output=True).stdout
result = commandout.decode('utf-8').split('\n')
for r in result:
if "264" in r:
encoders = re.search(pattern, r).group(1).split(' ')
encoders = re.search(pattern, r)
if encoders:
for v_c in Refacer.VIDEO_CODECS:
for v_k in encoders:
if v_c == v_k:
if self.__try_ffmpeg_encoder(v_k):
for v_k in encoders.group(1).split(' '):
if v_c == v_k and self.__try_ffmpeg_encoder(v_k):
self.ffmpeg_video_encoder = v_k
self.ffmpeg_video_bitrate = Refacer.VIDEO_CODECS[v_k]
print(f"Video codec for FFMPEG: {self.ffmpeg_video_encoder}")
return

VIDEO_CODECS = {
'h264_videotoolbox':'0', #osx HW acceleration
'h264_nvenc':'0', #NVIDIA HW acceleration
#'h264_qsv', #Intel HW acceleration
#'h264_vaapi', #Intel HW acceleration
#'h264_omx', #HW acceleration
'libx264':'0' #No HW acceleration
'h264_videotoolbox': '0',
'h264_nvenc': '0',
'libx264': '0'
}
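`__check_encoders` reads the `ffmpeg -codecs` listing, extracts the names after `encoders:` on lines that mention `264`, and walks `VIDEO_CODECS` in insertion order (hardware encoders first, `libx264` last), keeping the first encoder that survives the one-second `testsrc` encode in `__try_ffmpeg_encoder`. The older line `re.search(pattern, r).group(1)` raises `AttributeError` when a line containing `264` has no `encoders:` list; the newer version checks the match first. The sketch below isolates that selection logic with the test encode injected as a callable, so it runs without ffmpeg. Both function names are hypothetical, and unlike the original, this version pools encoder names from every matching line before choosing.

```python
import re
from typing import Callable, Iterable, List

# Same pattern as __check_encoders.
ENCODERS_RE = re.compile(r"encoders: ([a-zA-Z0-9_]+(?: [a-zA-Z0-9_]+)*)")


def listed_h264_encoders(codecs_output: str) -> List[str]:
    """Return encoder names from the lines of `ffmpeg -codecs` output mentioning 264."""
    names: List[str] = []
    for line in codecs_output.splitlines():
        if "264" in line:
            match = ENCODERS_RE.search(line)
            if match:  # not every such line lists encoders
                names.extend(match.group(1).split(" "))
    return names


def pick_encoder(codecs_output: str, preferred: Iterable[str],
                 probe: Callable[[str], bool],
                 fallback: str = "libx264") -> str:
    """First encoder, in `preferred` order, that ffmpeg lists and `probe` accepts."""
    available = set(listed_h264_encoders(codecs_output))
    for name in preferred:
        if name in available and probe(name):
            return name
    return fallback
```

Passing `Refacer.VIDEO_CODECS` as `preferred` iterates its keys in insertion order, and a wrapper around the existing test encode can serve as `probe`.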

75
refacer_bulk.py
Normal file

@@ -0,0 +1,75 @@
# refacer_bulk.py
#
# Example usage:
# python refacer_bulk.py --input_path ./input --dest_face myface.jpg --facetoreplace face1.jpg --threshold 0.3
#
# Or, to disable the similarity check (i.e., just apply the destination face to all detected faces):
# python refacer_bulk.py --input_path ./input --dest_face myface.jpg

import argparse
import os
import cv2
from pathlib import Path
from refacer import Refacer
from PIL import Image
import time
import pyfiglet

def parse_args():
parser = argparse.ArgumentParser(description="Bulk Image Refacer")
parser.add_argument("--input_path", type=str, required=True, help="Directory containing input images")
parser.add_argument("--dest_face", type=str, required=True, help="Path to destination face image")
parser.add_argument("--facetoreplace", type=str, default=None, help="Path to face to replace (origin face)")
parser.add_argument("--threshold", type=float, default=0.2, help="Similarity threshold (default: 0.2)")
parser.add_argument("--force_cpu", action="store_true", help="Force CPU mode")